Supporting cybersecurity for research

Sometimes, size matters

I gave a talk a few days ago on why universities need to start paying attention to cybersecurity and research[1]. Historically we’ve treated how and whether research addresses cybersecurity not just with kid gloves, but mostly with thoughts and prayers. We see how well that’s worked out IRL. However, pending regulations will require any institution receiving over $50 million a year in federal funding to certify that its researchers are complying with a set of very basic, but fundamentally important cybersecurity practices. You know, lunatic fringe things like backing up essential data.

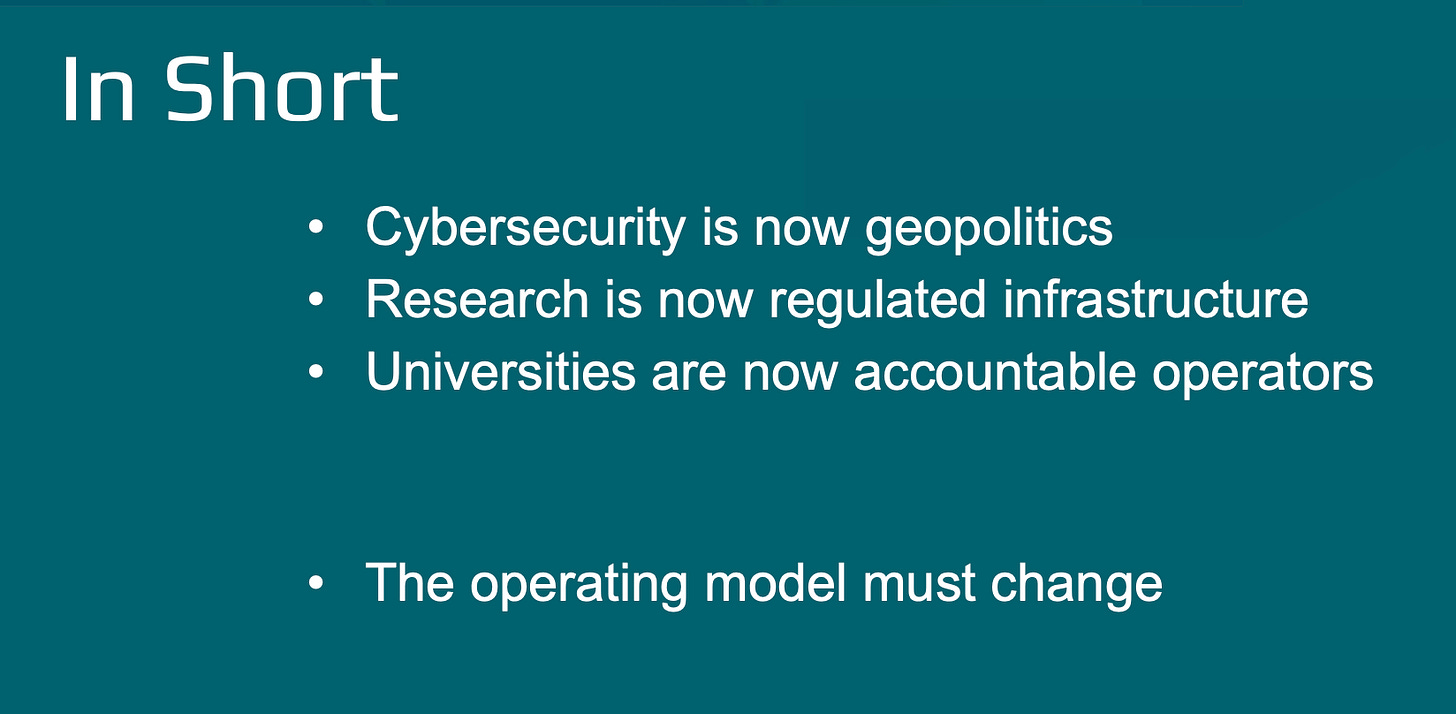

I think my final slide captured this rather well.

It’s that last bullet point “the operating model must change” that is going to give us the most heartburn.

Let me frame this from experience. Picture the typical large research university with a modest central security office of ~30 people. Perhaps four or so dedicated to compliance. There’s undoubtedly a research IT team associated with central IT of 4-8 people. Across campus there are roughly 1500-2000 faculty with active federal awards. The math is pretty brutal - if every faculty member needs to meet with some form of cybersecurity professional, it simply can’t happen using central resources. The problem is not compliance. The problem is scale and structure.
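To make that arithmetic concrete, here is a rough back-of-the-envelope sketch. The faculty and compliance staff counts come from the picture above; the rate of five researcher meetings per staffer per week is purely an illustrative assumption, as is the function name, and a real campus would plug in its own numbers.

```python
import math


def weeks_to_meet_everyone(researchers: int, staff: int, meetings_per_staff_per_week: int) -> int:
    """Weeks needed for `staff` people to hold one meeting with every researcher."""
    weekly_capacity = staff * meetings_per_staff_per_week
    return math.ceil(researchers / weekly_capacity)


# From the text: roughly 1500-2000 funded faculty and about four compliance staff.
# Assumption (not from the text): each staffer can fit in five meetings a week.
for researchers in (1500, 2000):
    weeks = weeks_to_meet_everyone(researchers, staff=4, meetings_per_staff_per_week=5)
    print(f"{researchers} researchers: about {weeks} weeks for a single round of meetings")
```

Under those assumptions a single introductory meeting with every funded researcher takes well over a year, before any follow-up or implementation work begins.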

If you’ve read my earlier posting on distributed IT, you might be thinking, “no problem, we’ve got hundreds of IT staff spread across campus, many already focused on supporting research.” Well, maybe - there’s a host of issues to consider here, not the least of which is that every one of those folks is already working a full-time job. If the new requirements of cybersecurity for research were simply a handshake and hearty pat on the back, we might muddle through. But implementing the requirements isn’t that simple, and worse, few of those hundreds of staff are security professionals[2]. Their jobs are more generally helping make bespoke infrastructure work, not negotiating new and unwanted cybersecurity practices[3]. And of course, we have a huge bootstrapping problem. Having spent years largely ignoring the cybersecurity posture of most researchers, institutions are now confronting requirements that are both specific and enforceable - and will demand implementation across a large swath of your researchers in a short period of time. This will be challenging. To be sure, some hard expenses will be incurred, but primarily the cost will be in humans available and trained to do the job. This is not a failure of intent or funding alone; it is a structural failure of how universities have chosen to organize cybersecurity.

Which brings me to the core of this post: what does it mean for a security professional to support research? What do they need to know, and how does that differ from “ordinary” or enterprise cybersecurity?

A number of years ago, I was sharing a meal with a friend and colleague and we were reflecting on this question. More specifically, as we surveyed the bulk of our field we were both struck by how ill-prepared the typical security practitioner was to support research. They are often unversed in the vocabulary and tools commonly found among the research community, so it’s no wonder practitioners tend to view research support as simply ‘more IT’. If the two realms of enterprise and research were to remain just that, separate realms with only the loosest connection, then there’d be no problem. But we saw this as unlikely - even a decade ago, it was pretty plain that institutions would need to take on cybersecurity within research programs; it really didn’t take any special foresight. One simply needed to look up from the keyboard once in a while.

For many years, the paradigm for IT support for research was the ACI-REF, or Advanced Cyberinfrastructure Research and Education Facilitator. Essentially an ACI-REF is an IT professional dedicated to working with researchers, trained to tailor IT practices to the unique context of research, as well as helping researchers avail themselves of ordinary - usually campus-provided - IT services. The program, originally an NSF-funded effort, was pretty successful. It truly defined a role that has been adopted broadly, even if the name doesn’t always get used. An effective pool of ACI-REFs bridges the entirely customized and local research lab environment with the standardized and homogenized enterprise IT environment. The one fatal flaw in the ACI-REF approach, however, is that it tends to remain a highly bespoke service and is difficult if not impossible to scale.

The question we asked was whether the ACI-REF model could be inflected into a role that bridged cybersecurity and research facilitation, call it a SEC-REF. Our idea was to build a program where security professionals were introduced to the world of research IT and trained to work with faculty researchers. This was the reverse of how so many security professionals approach the issue, which is to try to shoehorn research into enterprise security practices.

After discussing this with NSF, we realized it was a bit much for an initial proposal (though in hindsight, I wish we’d pushed harder), but we did receive funding to develop and deliver an initial set of training workshops[4]. We held the first workshop, in person, in December of 2019. Of course, our plans were derailed by COVID. The initial workshop ended up being the only one held in person - which was too bad. While switching to an online format allowed far more attendees, the in-person workshop created such a terrific opportunity for people to get to know one another. We had talks by dynamic (and influential) speakers such as Larry Smarr and Frank Würthwein. The esprit de corps and knowledge sharing were impactful. After the online workshops and the grant funding concluded, the community of alumni continued to meet monthly, and eventually evolved into a Research Cybersecurity Interest Group that is still meeting monthly[5].

So did we succeed with our goal? Not really - I’d like to think introducing a modest community of cybersecurity practitioners to the nuances of research facilitation and some of the terms of art of research IT was valuable. I still hear from many of the workshop’s alumni that it did help them reorient to the nature of research support. But we did not establish the SEC-REF as a role, nor do I think it would have solved the scale problem I discussed earlier, even if we had done so. The goal of the SEC-REF proposal wasn’t to establish yet another small team, analogous to the ACI-REFs that worked in a campus silo. Rather, we had hoped that by introducing to ‘ordinary’ cybersecurity specialists the communications skills of the ACI-REF, combined with the openness to adaptability research requires, we’d reshape how university cybersecurity operated. While this wouldn’t have solved the scale problem, it was a reasonable step in that direction.

I think this discussion is ripe for revisiting at the moment, as universities begin to face the reality that it is no longer possible to operate as they traditionally have with regard to research and cybersecurity: the operating model must change. But how to do that at scale remains daunting, especially when combined with the bootstrapping issue mentioned above. It’s likely that many institutions will take the stance that this is fundamentally a problem for researchers to solve. It’s your grant money and your lab, so you’ll need to adapt to modern cybersecurity practices and begin including those costs in your proposals. And that’s not wrong. I know federal agencies are more amenable to the inclusion of cybersecurity costs in proposals than ever before - indeed, we are entering a period where their inclusion is seen as signaling responsibility and maturity in a proposal, which can only improve the likelihood of being funded.

But to leave the issue there - as the researcher’s problem - is both naïve and self-defeating. As the previous bullet point on my slide says, universities are now accountable operators of research infrastructure. Not only do they need researchers to succeed in obtaining funding, but they themselves are now required to certify institutional compliance with a research security program. Thus the failure of a researcher impacts not merely their own research program, but the institutional capacity to receive federal funding. Note that no other element of a research security program has as deep an operational impact in research environments as the cybersecurity component.

Short of hiring hundreds of new cybersecurity professionals, it still strikes me that leveraging the existing distributed IT community is the solution most available to institutions. Having hundreds of experienced IT professionals, many of whom have existing relationships with faculty researchers, is simply too convenient to ignore. But galvanizing them as a community, particularly as a community focused on meeting institutional obligations, won’t happen on its own.

In particular, while the forthcoming cybersecurity practices for research strongly embrace the use of a compensating (i.e., alternative) control where a given control might interfere with research, I worry that this flexibility will be misused. Historically, distributed IT staff have supported faculty-driven requirements; now they will be required to bring institutional requirements to the faculty environment. This is not entirely novel, but it is a reversal of emphasis, and for many it is a new position to navigate. That is, in the desire to avoid conflict with researchers, the flexibility of compensating controls may be seen as implicitly encouraging broad workarounds rather than narrowly scoped, risk-based exceptions where they may be warranted. That undermines the spirit if not the letter of the regulations. I’m already seeing this with some of the practitioners I know.

If institutions want to address this, it strikes me that providing some form of training that normalizes institutional practice is required. Training that bridges established enterprise security and risk-based analysis with the situational flexibility required by research. Thus we return to the notion of a SEC-REF, or at least the intention of the original SEC-REF proposal.

Is SEC-REF a model, a training philosophy, a cultural intervention, or merely a historical scaffold? I would argue “why choose?” On one hand it clearly is a model, an inflection of the original ACI-REF model. In practice, and you can see this in the slide decks, we absolutely adopted a training philosophy from the ACI-REF universe. To wit: working with researchers requires pausing, and not showing up with demands and requirements, but rather, open eyes and ears. That is, to first understand risk and workflow from the researcher’s perspective before introducing classic cybersecurity practices. These together lay bare the historical scaffold we were building upon.

However, as we originally conceived it, it most certainly was a cultural intervention. It was our attempt to bring the challenge, energy, and variety of research practice directly into the lived reality of cybersecurity work. I think this is what we were the most excited about on a personal level. Critically, the cultural dimension operates in both directions: it requires the enterprise security professional to recalibrate their relationship to researchers, and it requires the distributed IT staff to see themselves as part of the institutional arm of cybersecurity.

We can look at the agenda for the earlier workshops as a strawman for a SEC-REF training program[6].

- Working with researchers (framing the research endeavor, facilitation skills)
- Introduction and overview of the grant planning and general lifecycle
- Introduction and overview of national and international resources (e.g., ACCESS - Advanced Cyberinfrastructure Coordination Ecosystem: Services & Support, or OSG - Open Science Grid)
- Introduction to the current practice tool set (e.g., Globus, perfSONAR, and the evolution away from science DMZs)
- IAM in modern, highly-collaborative research projects (CILogon, ACCESS, GEANT, eduGAIN…)
- Introduction to ACI-REF and SEC-REF as an extension
- Research cybersecurity expectations - specifically the NSF 14 control set

Subsequent days could focus on a detailed examination of the NSF 14 controls and on when and how to extend enterprise services into a lab environment to address them: for example, what it means for enterprises offering MFA services to support phishing-resistant MFA on Linux-based infrastructure. Follow-on training could be built around use cases drawn from the experience of implementing the research cybersecurity program in campus labs.

I suspect what’s missing is some institutional framing of research security - perhaps the entire training program needs periodic updates from the office of the VP or VC of research, explaining why this isn’t business as usual but essential for the institution’s research mission. Ultimately the goal here isn’t just to begin and end with a handful of security controls but to nudge both communities, central and distributed, toward a mutual institutional reorientation.

The path forward is not defined by specific titles or organizational charts, but by the fundamental recognition that research cybersecurity has matured into a core institutional function. Compliance mandates have simply accelerated an inevitable reality: the era of treating security as a researcher’s individual burden or an artisanal service delivered at the margins is over. Success now requires a structural alignment between central security, distributed IT, and research leadership to build a shared framework for risk and flexibility. The institutions that lead will be those that invest in ‘translational’ talent—professionals who can bridge disparate domains to normalize security as an intrinsic part of the research enterprise. Ultimately, the operating model must evolve because the legacy approach is no longer compatible with the integrated, data-driven nature of modern global research.

[1] Information on the full series is available at https://internet2.edu/cloud/cloud-learning-and-skills-sessions/class-events-schedule/secure-research-environments/.

[2] Please don’t make me defend cybersecurity as a profession requiring professionals. Everyone in IT has some cybersecurity responsibilities, but that’s not the same. Everyone with a PhD is a doctor, but you wouldn’t want most of them operating on you.

[3] Again, this is a broad brush I’m painting with here. I know plenty of distributed IT folks who do pressure researchers into adopting institutionally mandated practices, but that is rarely their swimlane.

[4] The slides are available at https://drive.google.com/file/d/1AyYGfJZGifO81Nr0M7M_hL0HE1-Ok0h_/view?usp=drive_link.

[5] You can reach out to them at rcs-coordinators@carcc.org.

[6] I’ve updated this a bit to reflect current initiatives.
